Google Ads defaults to data-driven attribution. Meta's default setting claims credit for anyone who clicked an ad in the last 7 days or viewed one in the last day. GA4 applies its own attribution model and lookback window, both of which can be changed in its settings. Your client sees three different numbers for the same conversion and asks which one is right.

None of them are right. They're all models — simplifications of a messy reality. The question isn't which model is correct; it's which model leads to better decisions for this specific client.

The six models agencies actually encounter

Last-click attribution

100% of credit goes to the final touchpoint before conversion.

Where you'll see it: GA4 (some reports), most CRM tools, default Shopify analytics.

The trade-off: Easy to explain, easy to optimize against, and completely blind to everything that happened before the last click. A client running brand awareness on Meta will see zero attributed conversions while their Google Ads "brand" campaign gets all the credit for the interest Meta created.

Use it when: The client has a short sales cycle (e-commerce impulse buys), runs single-channel campaigns, or needs a simple story for stakeholders who won't engage with nuance.
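A minimal sketch of the rule, assuming each converting customer's journey is available as an ordered list of channel names (the channel names and the `last_click` helper are hypothetical, made up for illustration). It reproduces the brand-search scenario above: Meta appears in two of three journeys and gets nothing.

```python
from collections import Counter

def last_click(journeys):
    """Give 100% of each conversion's credit to the final touchpoint."""
    credit = Counter()
    for touchpoints in journeys:
        if touchpoints:  # skip journeys with no recorded touchpoints
            credit[touchpoints[-1]] += 1.0
    return dict(credit)

# Hypothetical data: one list per converting customer, oldest touch first.
journeys = [
    ["meta_awareness", "google_brand_search"],
    ["meta_awareness", "email", "google_brand_search"],
    ["google_brand_search"],
]

credit = last_click(journeys)
print(credit)  # {'google_brand_search': 3.0}
```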

First-click attribution

100% of credit to the first touchpoint in the journey.

The trade-off: Good for understanding which channels fill the top of funnel. Terrible for optimizing conversion. A blog post that introduced a user six months ago gets full credit for a purchase driven by a retargeting ad yesterday.

Use it when: The client's primary goal is awareness or market entry, and they need to justify spend on content, social, or display.
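As a rough illustration, assuming touchpoints arrive as `(date, channel)` pairs in no particular order (the dates, channel names, and `first_click` helper are invented for the example), first-click only needs the earliest touch. Here the blog post from six months earlier takes all the credit.

```python
from datetime import date

def first_click(touchpoints):
    """Give 100% of the credit to the earliest touchpoint by date."""
    if not touchpoints:
        return {}
    earliest = min(touchpoints, key=lambda t: t[0])
    return {earliest[1]: 1.0}

# Hypothetical journey, deliberately out of order.
journey = [
    (date(2024, 7, 14), "retargeting_ad"),
    (date(2024, 1, 10), "blog_post"),
    (date(2024, 6, 2), "email"),
]

credit = first_click(journey)
print(credit)  # {'blog_post': 1.0}
```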

Linear attribution

Equal credit across every touchpoint.

The trade-off: No touchpoint is ignored, but no touchpoint is prioritized either. If a customer had 12 interactions, each gets 8.3% credit. That's mathematically fair and strategically useless — it doesn't help you decide where to spend the next dollar.

Use it when: You genuinely don't know which channels matter and need a baseline before running incrementality tests.
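The 8.3% figure is just 1 divided by 12. A small sketch, assuming a journey is an ordered list of channel names (the names and the `linear` helper are hypothetical), shows how per-touch shares roll up to channel totals:

```python
from collections import defaultdict

def linear(journeys):
    """Split each conversion's credit equally across its touchpoints."""
    credit = defaultdict(float)
    for touchpoints in journeys:
        if not touchpoints:
            continue
        share = 1.0 / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)

# Hypothetical journey with 12 interactions across 4 channels.
journey = ["display", "email", "organic", "paid_search"] * 3

per_touch = 1.0 / len(journey)
print(round(per_touch * 100, 1))  # 8.3 (percent per interaction)

credit = linear([journey])
# Each channel appears 3 times, so each ends up with a quarter of the credit.
```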

Time-decay attribution

More credit to touchpoints closer to conversion, less to earlier ones.

The trade-off: It matches the recency effect in real buying behavior, but the decay rate is arbitrary. GA4's default half-life is 7 days, while your client's consideration window might be 3 days or 30. If the decay doesn't match the actual buying cycle, the model distorts more than it reveals.

Use it when: The client has a defined consideration period (2–4 weeks for SaaS, days for e-commerce) and you can tune the decay window to match.
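One common way to express the decay is a half-life: a touchpoint loses half its weight for every `half_life_days` it sits before the conversion. The sketch below assumes that exponential form and a journey given as `(channel, days_before_conversion)` pairs; the `time_decay` helper and the sample journey are illustrative, not any platform's exact formula.

```python
from collections import defaultdict

def time_decay(touchpoints, half_life_days=7.0):
    """Weight each touch by 0.5 ** (days_before / half_life), then normalize."""
    if not touchpoints:
        return {}
    weights = [(ch, 0.5 ** (days / half_life_days)) for ch, days in touchpoints]
    total = sum(w for _, w in weights)
    credit = defaultdict(float)
    for ch, w in weights:
        credit[ch] += w / total
    return dict(credit)

# Hypothetical journey: (channel, days before the conversion).
journey = [("blog_post", 14), ("email", 7), ("retargeting_ad", 0)]

week = time_decay(journey, half_life_days=7)    # raw weights 0.25, 0.5, 1.0
month = time_decay(journey, half_life_days=30)  # a much flatter curve

print({ch: round(c, 3) for ch, c in week.items()})
# {'blog_post': 0.143, 'email': 0.286, 'retargeting_ad': 0.571}
```

Running the same journey with a 30-day half-life gives the blog post noticeably more credit, which is why the half-life should follow the client's actual consideration window.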

Position-based (U-shaped) attribution

40% to first touch, 40% to last touch, 20% split across the middle.

The trade-off: Recognizes that discovery and conversion matter most. Middle interactions — the email nurture, the comparison blog post, the retargeting ad — get compressed into a small slice. For clients with long, complex journeys, that middle 20% might contain the interactions that actually moved the needle.

Use it when: The client runs both brand and performance campaigns and wants a model that respects both without ignoring the journey between them.
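A sketch of the 40/40/20 split, assuming an ordered list of channel names. The handling of one- and two-touch journeys is an assumption made here for completeness, since tools differ on those edge cases, and the `position_based` helper is hypothetical.

```python
from collections import defaultdict

def position_based(touchpoints, first=0.4, last=0.4):
    """40% to the first touch, 40% to the last, the rest split across the middle."""
    n = len(touchpoints)
    if n == 0:
        return {}
    credit = defaultdict(float)
    if n == 1:
        credit[touchpoints[0]] = 1.0   # assumption: a lone touch gets everything
    elif n == 2:
        credit[touchpoints[0]] += 0.5  # assumption: no middle, so split evenly
        credit[touchpoints[1]] += 0.5
    else:
        credit[touchpoints[0]] += first
        credit[touchpoints[-1]] += last
        middle_share = (1.0 - first - last) / (n - 2)
        for channel in touchpoints[1:-1]:
            credit[channel] += middle_share
    return dict(credit)

# Hypothetical five-touch journey: three middle touches share the 20%.
journey = ["paid_social", "email", "comparison_post", "retargeting_ad", "brand_search"]
credit = position_based(journey)
# Each middle touch receives roughly 6.7% of the credit.
```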

Data-driven attribution

Machine learning assigns credit based on statistical contribution of each touchpoint to actual conversions.

The trade-off: The best model in theory. In practice, it requires volume — Google recommends 3,000+ conversions per month for reliable results. Below that threshold, the model doesn't have enough signal and produces noisy, unstable results. Most SMB clients don't hit this bar.

Use it when: The client has high conversion volume (3,000+/month), tracks consistently across channels, and your team can validate the outputs against business intuition.
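To make "statistical contribution" concrete, one common formalization is the Shapley value: average each channel's marginal lift over every order in which channels could be added. The toy sketch below uses invented conversion rates for two channels. It illustrates the idea only and is not a description of how any specific platform's model is built.

```python
from itertools import permutations

def shapley(channels, value):
    """Average each channel's marginal contribution over all orderings."""
    credit = {ch: 0.0 for ch in channels}
    orderings = list(permutations(channels))
    for order in orderings:
        seen = frozenset()
        for ch in order:
            with_ch = seen | {ch}
            credit[ch] += value(with_ch) - value(seen)
            seen = with_ch
    return {ch: total / len(orderings) for ch, total in credit.items()}

# Hypothetical conversion rates for users exposed to each set of channels.
rates = {
    frozenset(): 0.00,
    frozenset({"search"}): 0.04,
    frozenset({"social"}): 0.01,
    frozenset({"search", "social"}): 0.07,
}

credit = shapley(["search", "social"], lambda exposed: rates[frozenset(exposed)])
# search averages (0.04 + 0.06) / 2 = 0.05; social averages (0.03 + 0.01) / 2 = 0.02
```

Every rate in that table has to be estimated from observed journeys, which is where the volume requirement comes from: with few conversions, the estimates for rarer channel combinations are too noisy to trust.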

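Attribution models at a glance

| Model | How credit is assigned | Use it when |
| --- | --- | --- |
| Last-click | 100% to the final touchpoint | Short sales cycles, single-channel campaigns, or stakeholders who need a simple story |
| First-click | 100% to the first touchpoint | The primary goal is awareness or market entry |
| Linear | Equal credit to every touchpoint | You need a baseline before running incrementality tests |
| Time-decay | More credit the closer a touchpoint is to conversion | The consideration period is defined and the decay window can be tuned to it |
| Position-based (U-shaped) | 40% first touch, 40% last touch, 20% across the middle | The client runs both brand and performance campaigns |
| Data-driven | Machine learning, based on each touchpoint's statistical contribution | Conversion volume is high (3,000+/month) and tracking is consistent |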

The real problem: platform disagreement

Attribution models are the theory. The practice is messier.

Meta's default attribution setting counts a conversion if someone clicked an ad within 7 days or merely viewed one within 1 day, so it can claim people who never clicked. Google Ads counts the click. GA4 counts the session. For the same purchase, you'll see it attributed to three different channels in three different dashboards.

This isn't a bug. Each platform is incentivized to claim credit. Your job as an agency is to pick one source of truth for reporting, explain why, and be consistent.

A practical approach:

- Use GA4 as the single source for cross-channel reporting

- Use platform-native attribution (Google Ads, Meta) for in-platform optimization

- Never add up conversions across platforms — you'll double-count
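The double-counting is easy to see if you can line platform claims up against real orders. The sketch below assumes you can join each platform's claimed conversions to a shared order ID, which in practice often requires your own tagging; the IDs and variable names are made up for illustration.

```python
# Hypothetical order IDs each ad platform claims credit for.
meta_claims = {"A100", "A101", "A102"}
google_ads_claims = {"A101", "A102", "A103"}

# Hypothetical orders actually recorded by the store backend.
store_orders = {"A100", "A101", "A102", "A103"}

summed_claims = len(meta_claims) + len(google_ads_claims)
claimed_by_both = meta_claims & google_ads_claims

print(summed_claims)            # 6 conversions if you add the dashboards together
print(len(store_orders))        # 4 orders actually happened
print(sorted(claimed_by_both))  # ['A101', 'A102'] were counted twice
```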

How to explain attribution to clients

Most clients don't need to understand decay functions or Shapley values. They need to understand three things:

- The number depends on how you count. Different tools count differently. That's normal.

- We picked the model that fits your business. Here's why. (One sentence.)

- Here's what it means for where we spend. This channel is working. This one isn't. Here's what we're changing.

If you can say those three things with specific numbers behind each one, attribution becomes a strategic conversation instead of a technical argument.

When to change a client's attribution model

Most agencies pick an attribution model during onboarding and never revisit it. That is a mistake. The model that made sense when a client was spending $5,000/month on a single channel may actively mislead when they are spending $50,000 across four channels two years later.

Three signals tell you the current model is wrong:

Budget decisions consistently feel misaligned with results. You increase spend on the channel that gets the most attributed conversions, but total revenue doesn't move. Or you cut a channel that looked weak on paper, and conversions drop across other channels a month later. When optimization based on the model's output repeatedly produces unexpected results, the model is pointing you in the wrong direction.

The client's sales cycle has changed. A client that started as a direct-to-consumer e-commerce brand with 2-day purchase cycles may have expanded into B2B wholesale with 30-day consideration windows. Last-click attribution worked for the first business. It is actively harmful for the second. Similarly, a lead gen client that moves to e-commerce needs a model that credits the conversion event, not just the form fill that used to be the end of the funnel.

Conversion volume has crossed the data-driven threshold. If a client was running at 500 conversions per month when you set them up on position-based, and they now consistently hit 3,000+, data-driven attribution becomes viable. The machine learning model should outperform rules-based approaches once it has that much data, but not below it. Check the trailing 90-day average before switching, not a single strong month.
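The trailing-average check can be sketched in a few lines. This assumes a list of daily conversion counts, oldest first; the `dda_ready` helper, its parameter names, and the sample numbers are hypothetical, and the 3,000 threshold is simply the figure used in this article.

```python
def dda_ready(daily_conversions, threshold_per_month=3000, window_days=90):
    """True if the trailing-window average, scaled to 30 days, meets the threshold."""
    if len(daily_conversions) < window_days:
        return False  # not enough history to judge
    recent = daily_conversions[-window_days:]
    monthly_avg = sum(recent) / window_days * 30
    return monthly_avg >= threshold_per_month

# Hypothetical client A: two ordinary months, then one strong month (3,600).
spiky = [50] * 60 + [120] * 30
# Hypothetical client B: a steady 105 conversions per day (3,150 per month).
steady = [105] * 90

print(dda_ready(spiky))   # False: the 90-day average is about 2,200 per month
print(dda_ready(steady))  # True
```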

When you decide to switch, be transparent with the client. Show them a side-by-side comparison of the old model and the new model applied to the same data, covering at least 60 days. Explain that the numbers will shift — some channels will look stronger, others weaker — and that this reflects a more accurate picture, not a change in actual performance. Clients who understand the "why" before the numbers change will trust the new model. Clients who see their top channel suddenly drop 30% in attributed conversions without warning will not.
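A side-by-side view can be as simple as running both models over the same journeys and reporting the change per channel. The sketch below compares last-click with linear on three invented journeys; the helper names and data are hypothetical, and in a real review the journeys would cover the full 60 days.

```python
from collections import defaultdict

def last_click(journey):
    return {journey[-1]: 1.0}

def linear(journey):
    credit = defaultdict(float)
    for channel in journey:
        credit[channel] += 1.0 / len(journey)
    return dict(credit)

def compare(journeys, old_model, new_model):
    """Return (channel, old_credit, new_credit, change) rows over the same data."""
    old, new = defaultdict(float), defaultdict(float)
    for journey in journeys:
        for ch, c in old_model(journey).items():
            old[ch] += c
        for ch, c in new_model(journey).items():
            new[ch] += c
    channels = sorted(set(old) | set(new))
    return [(ch, old[ch], new[ch], new[ch] - old[ch]) for ch in channels]

# Hypothetical journeys (non-empty, oldest touch first).
journeys = [["meta", "email", "search"], ["meta", "search"], ["search"]]

rows = compare(journeys, last_click, linear)
for ch, old_c, new_c, change in rows:
    print(f"{ch:<8}{old_c:>6.2f}{new_c:>6.2f}{change:>+7.2f}")
```

Total credit is identical under both models (three conversions); only its distribution changes, which is the point to make to the client.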

The best time to revisit is during quarterly business reviews. Pull the current model's output alongside an alternative and ask: does the model's story match what we're seeing in actual revenue? If the answer is no, it is time to change. If you are evaluating tools to help surface these patterns automatically, the conversational analytics evaluation guide covers what to look for.

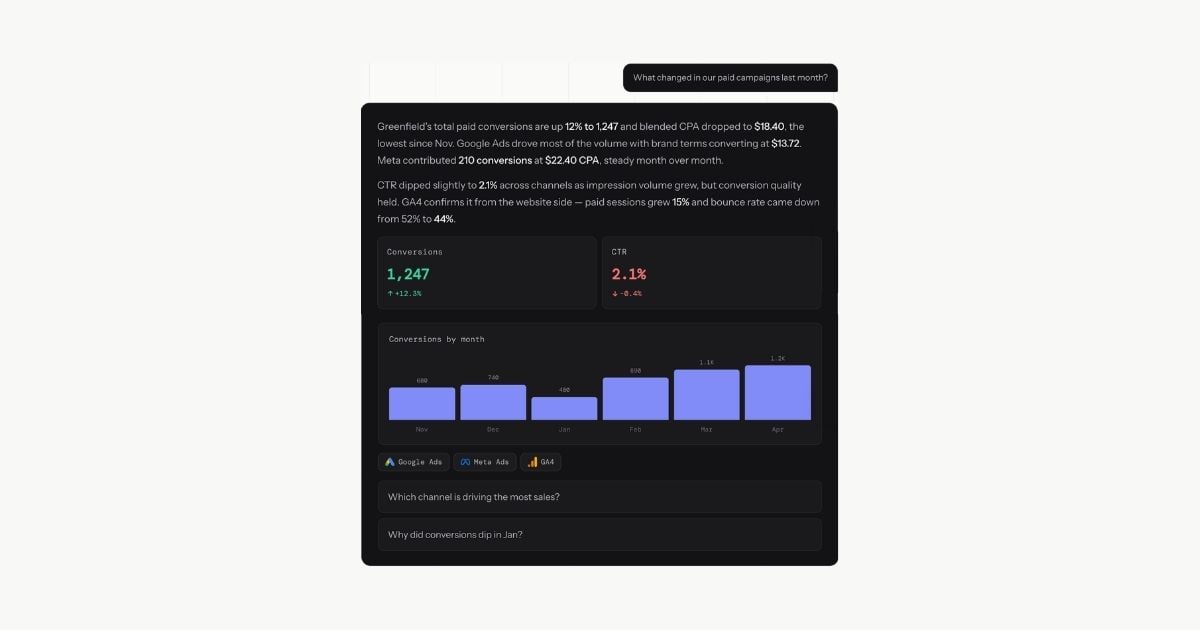

Where LDOO fits

LDOO doesn't replace your attribution platform. It reads the data those platforms produce and explains what it means.

Ask "which channels drove conversions last month?" and LDOO pulls from your connected sources — GA4, Google Ads, Meta — and returns a specific answer: "Paid search drove 47% of conversions at a CPA of $28.40, down from $34.10 last month. Meta contributed 31% but CPA increased 18%."

That's the explanation your client report needs. Not a debate about which attribution model is more philosophically correct — a clear answer about what happened and what to do next.

For a broader look at how conversational analytics fits into agency workflows beyond attribution, see conversational analytics for marketing agencies. The reporting workflow guide covers how these answers become branded client reports.

The attribution model matters. The explanation matters more.